As much as we’d all like to think of ourselves as unbiased observers of reality, research has shown many times over that our judgments are inherently biased, regardless of our intentions. Explicit and implicit biases affect our thoughts about ourselves, our interactions with other people, our decisions, our organizations, and our systems. Even our ability to recognize biases is biased. Biases are baked into our entire world, and they so completely permeate our lives that it takes real intention and effort to even begin to operate outside of them.

Results of bias abound in venture capital, where women founders receive less funding than men, and Black and brown founders receive an even smaller share relative to their white counterparts.

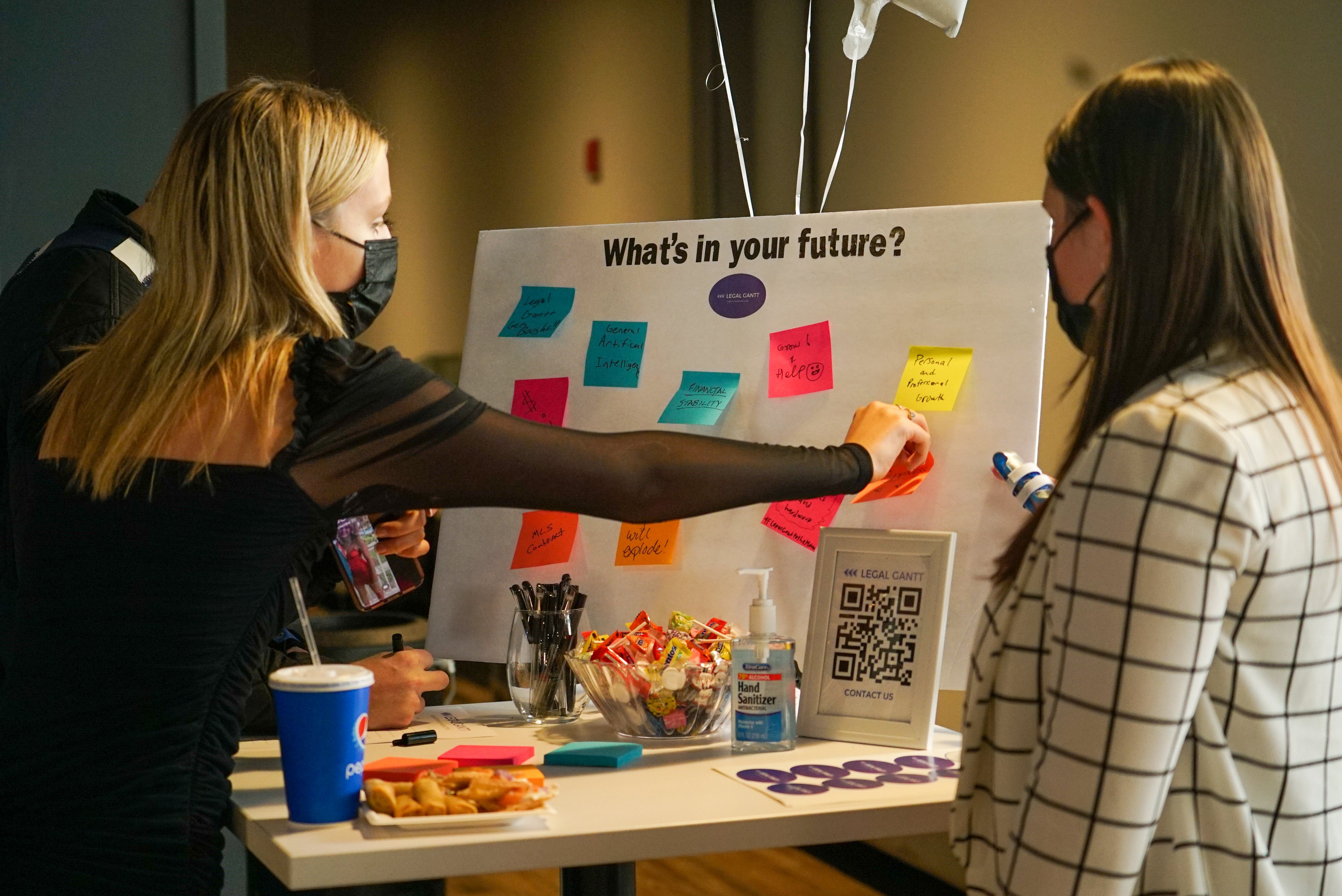

Render supports Access Ventures in running the Render Capital Competition, our region’s premier annual startup investment competition. In recent years, the competition has been the end cap and anchor for Louisville’s Startup Week, which has seen increasing momentum due to the dedication of its organizers to building and expanding Louisville’s startup ecosystem. One of the key performance drivers for this competition is signing eight SAFEs (simple agreements for future equity) with the most innovative, founder-diverse startups in the world. Being chosen as a winner from hundreds of applicants each year is contingent on founders moving to the region if they’re not here already. Part of the competition’s purpose is to drive growth in our ecosystem by providing an influx of diverse entrepreneurs who want to seize the advantages our region presents: incredible concentration in the healthcare, manufacturing, and logistics industries, proximity to other major cities, and a thriving, growing, welcoming startup community.

Each year, Render casts a wide net for applicants and relies on a network of 50-60 volunteer experts to help us narrow the field from over 200 stage 1 applicants to the top 50 stage 2 applicants. Stage 1 judges evaluate each company on a scale from 1 to 5 on a series of factors, and those scores are averaged across judges to produce a composite score. The applicants with the top 50 composite scores move on to stage 2, which is similarly scored by our volunteer judges to select the finalists. This method has served us well and has led to two cohorts of stellar companies and founders identified by tapping into the collective wisdom of the crowd. But could we do better? Crowds are made of individuals, and individuals are biased, no matter how we slice it. Could we be missing out on founders due to factors beyond their track records and abilities to lead and scale a new venture into the future? The volume of applications we receive is high, which makes it even more likely that an amazing startup gets lost amid the noise.

One of our core beliefs at Render is that we can reap major benefits from innovating and experimenting with new things, and we decided to apply this philosophy to the way we approached the first stage of the competition. Our goals were to minimize bias, accelerate the process, lighten the load on our fantastic judges, and eliminate an unintentional byproduct of our numerical scoring system, a snag we call the “tough judge” problem: if one judge is less forgiving in scoring the applications she’s assigned, those applications are at a disadvantage compared to applications that happened to draw more lenient judges. This problem can be addressed to a degree. We’d noticed it and applied several forms of score normalization to see which applications might have had their scores driven down by a tough judge. But normalization still didn’t get to the core of the problem, which is that each individual judge sees only a few applications out of the entire pool. It’s entirely possible that what looked to us like a “tough judge” was actually a very average score-giver who happened to be assigned the weakest applications.
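To make the “tough judge” problem concrete, here is a minimal sketch of one common form of score normalization: converting each judge’s scores to z-scores before averaging. The data, the judge and application names, and the choice of z-scores are all assumptions made for illustration; this is not a record of the exact normalization we applied.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical data: (judge, application, raw score on the 1-5 scale).
# judge_a scores low across the board; judge_b scores high.
scores = [
    ("judge_a", "app_1", 2), ("judge_a", "app_2", 3), ("judge_a", "app_3", 1),
    ("judge_b", "app_1", 4), ("judge_b", "app_4", 5), ("judge_b", "app_5", 3),
]

def composite_scores(rows):
    """Average every score an application received across its judges."""
    by_app = defaultdict(list)
    for _judge, app, score in rows:
        by_app[app].append(score)
    return {app: mean(values) for app, values in by_app.items()}

def normalize_by_judge(rows):
    """Re-express each score relative to that judge's own mean and spread."""
    by_judge = defaultdict(list)
    for judge, _app, score in rows:
        by_judge[judge].append(score)
    stats = {j: (mean(v), pstdev(v)) for j, v in by_judge.items()}
    normalized = []
    for judge, app, score in rows:
        mu, sigma = stats[judge]
        # A judge who gave identical scores carries no ranking information.
        z = (score - mu) / sigma if sigma > 0 else 0.0
        normalized.append((judge, app, z))
    return normalized

raw = composite_scores(scores)
adjusted = composite_scores(normalize_by_judge(scores))
```

In this made-up data, `app_2` was the tough judge’s favorite and `app_4` was the lenient judge’s favorite. The raw composites put `app_4` well ahead, while the normalized composites treat them as equals. Note that the normalization quietly assumes both judges saw equally strong batches of applications, which is exactly the assumption we couldn’t verify.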

And we weren’t the first to notice the inherent flaws of a numerical scoring system. MIT alumnus and startup co-founder Anish Athalye recognized the same snag. Humans happen to be especially terrible at assigning numerical scores (see Anish’s brilliant explanation here). What people are good at, though, is choosing the better of two options, a method called pairwise comparison. Anish’s rationale in creating the algorithm is a fascinating romp through social science, statistics, and computer science, but we’ll spare you the math here. The end result is Gavel, an open-source tool that enables judges to quickly rank order a large list of startup applications, or pitches, or really anything. Gavel has been used at hackathons and competitions across the country.
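For the curious, here is a minimal sketch of how a ranking can be recovered from nothing but “which of these two is better?” votes. It fits a basic Bradley-Terry model, a standard textbook technique for pairwise comparisons. It is not Gavel’s own algorithm, and the function name, application names, and votes are hypothetical.

```python
from collections import defaultdict

def bradley_terry(comparisons, iterations=100):
    """Estimate a relative strength per item from (winner, loser) pairs."""
    items = sorted({item for pair in comparisons for item in pair})
    wins = defaultdict(int)     # total wins per item
    matches = defaultdict(int)  # how many times each unordered pair was compared
    for winner, loser in comparisons:
        wins[winner] += 1
        matches[frozenset((winner, loser))] += 1

    strength = {item: 1.0 for item in items}
    for _ in range(iterations):
        updated = {}
        for i in items:
            # Standard iterative update: wins divided by the "expected exposure".
            denom = sum(
                matches[frozenset((i, j))] / (strength[i] + strength[j])
                for j in items if j != i
            )
            updated[i] = wins[i] / denom if denom else strength[i]
        total = sum(updated.values())
        strength = {i: s / total for i, s in updated.items()}  # keep the sum at 1
    return strength

# Hypothetical votes: each tuple is (preferred application, other application).
votes = [
    ("app_a", "app_b"), ("app_a", "app_c"), ("app_b", "app_c"),
    ("app_c", "app_b"), ("app_b", "app_a"), ("app_a", "app_b"),
]
strengths = bradley_terry(votes)
ranking = sorted(strengths, key=strengths.get, reverse=True)
```

No judge ever has to decide whether an application is a “3” or a “4”. Each vote is only a preference between two applications, so a judge’s personal scale never enters the picture. This simple version assumes every application wins at least one comparison; a production tool has to handle sparse and noisy votes more carefully.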

This year, we used Gavel to narrow down over 200 startup applications from across the world to the top 50 in advance of our competition. In an effort to minimize bias, identifying information was redacted from the applications. Those 50 moved on to stage 2, where they received more thorough review and gained the benefit of comments and insight from our expert judges. Although it’s still in progress, we’re calling this experiment a success: Gavel allowed us to streamline our process, cutting our timeline down by about 4 weeks, and most importantly, this year’s field of applicants is the most diverse yet. We recommend Gavel as a tool for your next hackathon or competition, and although we didn’t use it this way, its speed and ease of use are especially well suited to live events.

We’ll be celebrating our investment in this year’s cohort of startup winners on September 22nd in Louisville. Come join us! You can register for the event here.